Quantum computing in 2025 feels like a moment where the hype cycle is louder than ever, but the reality behind it tells a more grounded story. Everywhere you look, someone is discussing breakthroughs, pointing to exploding qubit counts, or envisioning a future where Shor’s and Grover’s algorithms rewrite what computers can do. That future is plausible, but it’s not evenly distributed yet. The systems we have today still sit firmly inside the NISQ (Noisy Intermediate-Scale Quantum) era, where quantum computers are powerful in theory but noisy and fragile in practice.

The gap between theoretical potential and hardware reality is still wide. Yes, we have algorithms capable of factoring massive numbers or searching unsorted databases faster than any known classical method. But the hardware running them is still dealing with short coherence times, low fidelity, environmental noise, and the enormous difficulty of scaling qubits without losing accuracy. 2025 is not the year of full-blown quantum advantage on useful tasks. However, it is the year when the field stabilizes and matures, pointing toward logical qubits, realistic roadmaps, and real clarity about what’s coming next.

Quantum Computing 2025 and the Modern Quantum Landscape

Welcome to the NISQ era. This is a stage where quantum computers have tens to thousands of physical qubits but still can’t reliably maintain information long enough to run deep, complex algorithms. Noise overwhelms the signal. That’s why the focus in 2025 shifts from raw qubit numbers to something much more important: stability, error correction, and the transition from physical qubits to logical qubits.

This article dives deep into the current physical limits of quantum hardware and the diversity of architectures powering the ecosystem. It also covers the near-term algorithms that actually work on noisy machines, such as VQE (Variational Quantum Eigensolver) and QAOA (Quantum Approximate Optimization Algorithm). These hybrid approaches pair classical and quantum strengths, giving us the first glimpse of practical, real-world use cases.

A basic concept for every reader: Not all qubits are equal.

- Physical qubits are the raw hardware.

- Logical qubits are the error-corrected, reliable qubits that matter for real computation.

Think of physical qubits as individual soldiers, and logical qubits as fully trained units — fewer in number, but far more capable.

The State of Quantum Hardware in 2025

1. The Qubit Count Race

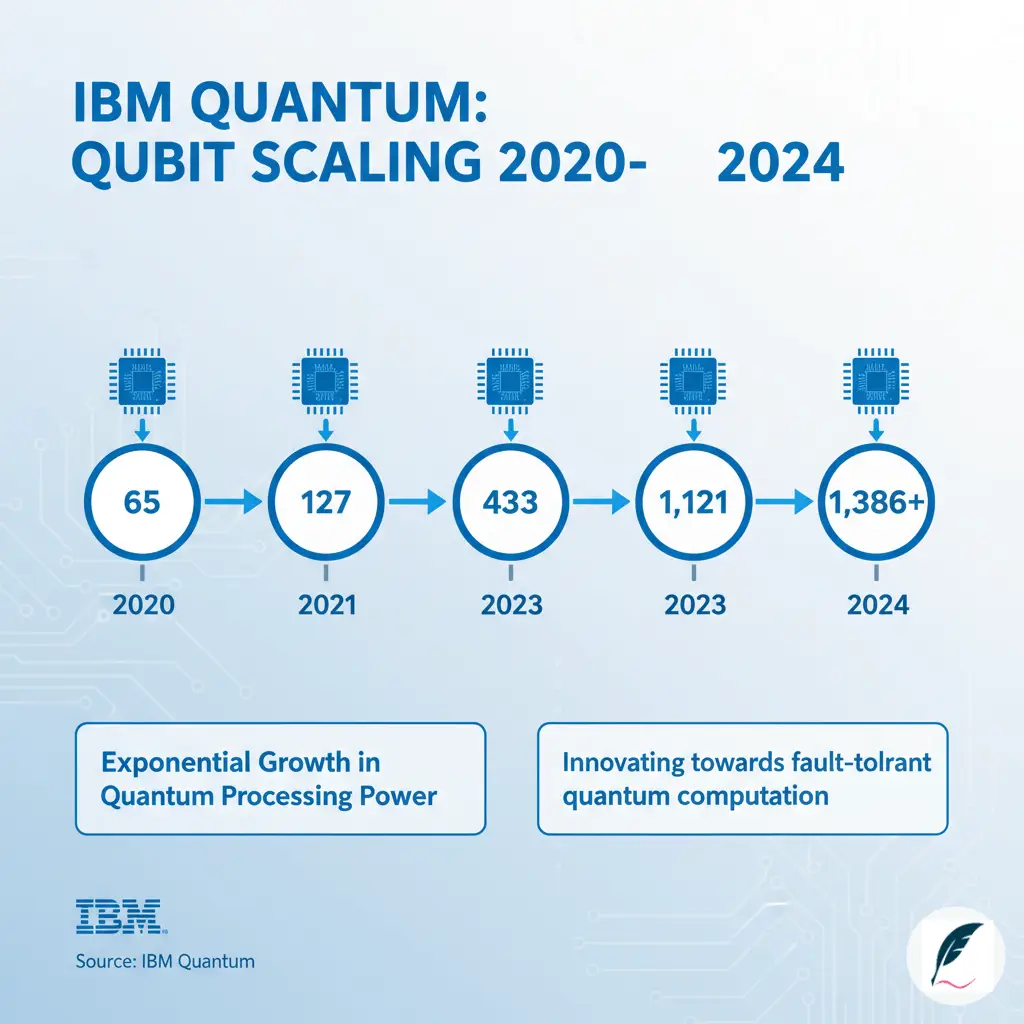

In 2025, tech companies are racing hard to build bigger and more accurate systems, each pushing its own hardware platform to take the lead.

IBM:

IBM continues to push superconducting qubit systems to large scales. Its Condor processor, with 1,121 qubits, showcases that expansion. These chips demonstrate density, control, and fabrication maturity, but short coherence times still restrict how deep their circuits can run.

Atom Computing (Neutral Atoms):

Neutral atom quantum computing has become a genuine competitor. Atom Computing has unveiled a system with around 1,180 physical qubits, built on highly scalable optical lattices. Density and connectivity remain challenges. However, these machines offer promising coherence times and stability, two things NISQ devices are desperate for.

D-Wave (Quantum Annealing):

D-Wave stands in its own category. Its quantum annealers ship with 5,000+ qubits, a number unmatched by gate-based quantum computers. But these qubits are specialized for optimization problems rather than universal quantum logic, so annealer qubit counts are not directly comparable with those of gate-based QPUs.

Logical Qubits and Fault Tolerance

The real frontier of quantum computing isn’t the qubit count. It’s logical qubits: qubits protected by quantum error correction.

Why does this matter?

Because physical qubits are extremely fragile. A single logical qubit may require thousands of physical qubits. Logical qubits are the foundation for reliable, scalable, fault-tolerant quantum computing.

- Quantinuum has made remarkable progress with logical qubit demonstrations, using trapped ions to reach error rates low enough to run circuits that are hard to simulate classically.

- Atom Computing has reported early success with 24 logical qubits, a significant milestone considering the field’s overall youth.

The Surface Code dominates current error-correction research. It is powerful, but expensive: a single logical qubit can require on the order of a thousand or more physical qubits, so large-scale algorithms like factoring RSA-2048 are estimated to need millions of physical qubits in total.
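To make the overhead concrete, here is a rough sketch of the usual back-of-the-envelope estimate. It assumes a rotated surface code (2d² − 1 physical qubits per logical qubit at code distance d) and the common heuristic that the logical error rate falls as A·(p/p_th)^((d+1)/2). The threshold `p_threshold=0.01` and prefactor `prefactor=0.1` are illustrative assumptions, not measured values, and the function names are made up for this example.

```python
def logical_error_rate(p_phys, distance, p_threshold=0.01, prefactor=0.1):
    """Heuristic logical error rate for an odd code distance (assumed scaling law)."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


def physical_qubits_per_logical(distance):
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * distance * distance - 1


def required_distance(p_phys, target_logical_error, p_threshold=0.01, prefactor=0.1):
    """Smallest odd distance whose heuristic logical error rate meets the target."""
    if p_phys >= p_threshold:
        raise ValueError("physical error rate must be below the threshold")
    distance = 3
    while logical_error_rate(p_phys, distance, p_threshold, prefactor) > target_logical_error:
        distance += 2  # surface code distances are conventionally odd
    return distance


if __name__ == "__main__":
    for p_phys in (1e-3, 5e-4):
        d = required_distance(p_phys, target_logical_error=2e-12)
        print(f"p_phys={p_phys}: distance {d}, "
              f"{physical_qubits_per_logical(d)} physical qubits per logical qubit")
```

Under these assumptions, lowering the physical error rate shrinks the required distance, which is why fidelity improvements matter as much as raw qubit counts.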

2. Major Qubit Modalities and Their Trade-offs

Quantum computing in 2025 thrives because it uses many different approaches. Each modality brings its own advantages and limitations, and together they push the field forward.

| Modality | Key Players | Pros | Cons |
|---|---|---|---|
| Superconducting | IBM, Google | Fast gate speeds, established fabrication processes. | Extremely fragile (short coherence time), requires stringent millikelvin (mK) cooling. |
| Trapped-Ion | IonQ, Quantinuum | Highest gate fidelity (accuracy), all-to-all connectivity possible, longer coherence times. | Slower gate speeds, challenges in scaling and ion shuttling. |
| Neutral Atom | QuEra, Atom Computing | Highly scalable, intrinsically low decoherence properties. | Complexity in single-atom addressing and control; connectivity still developing. |
| Photonic | PsiQuantum, Quandela | Operates at room temperature, easily integrated with fiber optics. | Non-deterministic quantum gates, measurement loss challenges. |

Example: Quantinuum’s H2-1. Trapped-ion systems like Quantinuum’s H2-1, which uses 56 fully connected qubits, focus on quality and strong qubit links rather than huge numbers. Because each qubit works with high accuracy and can interact with any other qubit, a 56-qubit trapped-ion machine can take on problems that would push even the world’s best classical supercomputers to their limits. It shows how, in quantum computing, better qubits can matter more than simply having more of them.

Benchmarking and Metrics: Defining “Useful” Quantum Computing in 2025

The physical qubit count is a poor measure of a QPU’s overall capability. To truly define “useful” quantum computing, we must look deeper into performance metrics.

1. Beyond the Qubit Count

- Fidelity (Gate Accuracy): This is arguably the most crucial metric. Fidelity measures the probability that a quantum operation (a gate) is executed without error. For scalable fault tolerance using the Surface Code, gate fidelity must stay comfortably above the code’s threshold of roughly 99%. In practice, 99.9% or better across the entire gate set is the commonly cited target for keeping the overhead manageable, and few devices reliably achieve it.

- Coherence Time: This metric measures how long a qubit can maintain its delicate quantum state, including superposition and entanglement. Environmental noise eventually causes the qubit to lose its quantum information, a process called decoherence. Longer coherence times allow for deeper, more complex quantum circuits.

- Quantum Volume (QV) and Algorithmic Qubits (AQ): IBM developed Quantum Volume, and IonQ developed Algorithmic Qubits. These synthetic metrics attempt to capture the overall “usefulness” of a QPU by combining the machine’s capacity (width), its achievable circuit depth, and its error rate. They offer a more standardized way to compare machines built on different technologies.
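The way fidelity and coherence time jointly cap circuit depth can be illustrated with some simple arithmetic. The sketch below assumes every gate fails independently with the same probability and that gates run strictly one after another. Both are simplifications, and the function names and example numbers are hypothetical rather than taken from any specific device.

```python
import math


def circuit_success_probability(gate_fidelity, n_gates):
    """Chance that all n_gates succeed, assuming independent, identical gate errors."""
    return gate_fidelity ** n_gates


def gates_for_target_success(gate_fidelity, target_success):
    """Largest gate count whose success probability stays at or above the target."""
    return math.floor(math.log(target_success) / math.log(gate_fidelity))


def max_sequential_gates(coherence_time_ns, gate_time_ns):
    """Sequential gates that fit inside one coherence time (integer nanoseconds)."""
    return coherence_time_ns // gate_time_ns


if __name__ == "__main__":
    # Illustrative values only: 99.9% fidelity, 100 microsecond coherence, 50 ns gates.
    print(circuit_success_probability(0.999, 1000))
    print(gates_for_target_success(0.999, 0.5))
    print(max_sequential_gates(100_000, 50))
```

Whichever budget runs out first, the error budget or the coherence budget, sets the usable depth, which is the intuition that composite metrics like Quantum Volume try to capture in a single number.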

2. The Quantum Supremacy vs. Quantum Advantage Debate

These two terms are often confused, but the distinction is critical for setting realistic expectations in the NISQ era.

- Quantum Supremacy: Google claimed this milestone in 2019 with its Sycamore processor. It is defined as a machine performing a specific, highly technical, and often non-useful task faster than the world’s best classical supercomputer. The task itself is arbitrary: it is a proof-of-concept benchmark, not a commercial tool.

- Quantum Advantage: This is the ultimate, commercially viable goal. Quantum Advantage is achieved when a quantum computer performs a commercially or scientifically useful task, such as discovering a new catalyst or optimizing global logistics, faster, cheaper, or more accurately than any classical computer can.

Current consensus in 2025 is clear: we remain in the NISQ era, demonstrating supremacy in niche tasks while still awaiting the advent of true, tangible Quantum Advantage in commercially relevant applications.

Algorithms and Applications for the NISQ Era in Quantum Computing 2025

Because current QPUs are noisy, they cannot run the deep, complex circuits required by algorithms like Shor’s. Instead, research in 2025 is centered on hybrid solutions that mitigate the effects of high error rates.

Hybrid Quantum-Classical Algorithms

NISQ-era quantum computers cannot run purely quantum algorithms reliably. The hardware is too noisy, and the circuit depth it can sustain is too shallow. Instead, practical near-term applications rely on hybrid approaches that partition problems between quantum and classical resources.

These hybrid algorithms represent a pragmatic compromise. They use quantum processors for what they do naturally, representing quantum states and exploring superposition spaces, and leave classical computers the tasks they excel at, like optimization and data processing.

Variational Quantum Eigensolver (VQE)

VQE targets one of quantum computing’s most promising applications: quantum chemistry. Understanding molecular behavior requires solving the Schrödinger equation, but this task scales exponentially with system size on classical computers.

Purpose: VQE estimates the ground-state energy of molecules – the lowest energy configuration that determines chemical stability and reactivity. This information is crucial for understanding chemical reactions, designing new catalysts, developing better pharmaceuticals, and creating advanced materials.

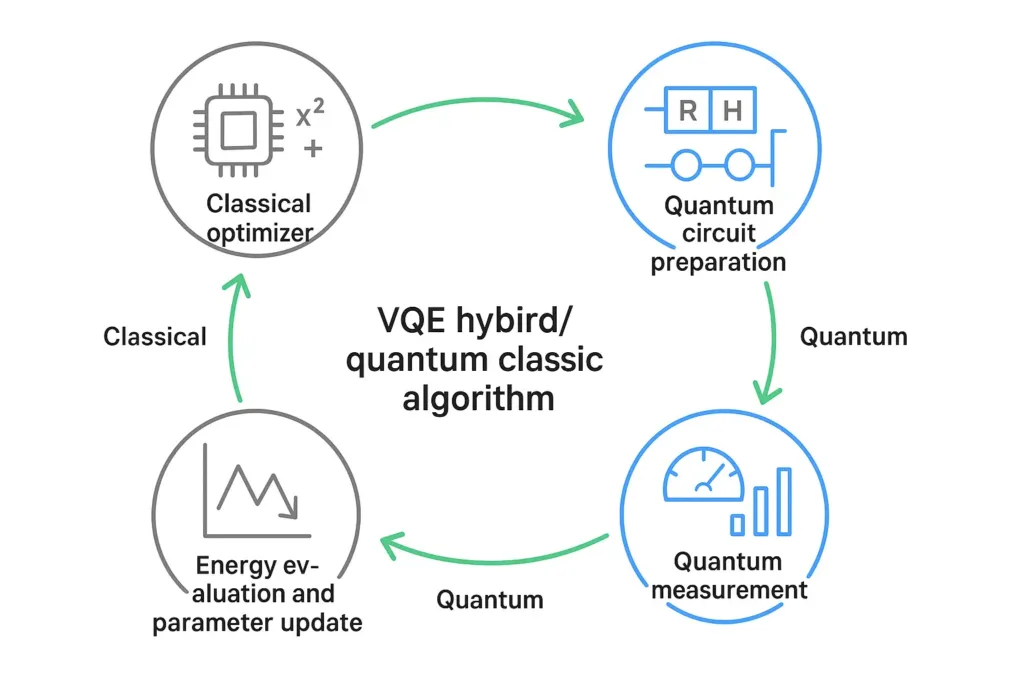

Hybrid Nature: VQE uses quantum circuits to prepare trial quantum states representing molecular configurations. The quantum processor prepares these states using parameterized gates that can be adjusted. The system then measures the energy of these states through quantum measurements.

Classical optimization algorithms analyze these measurements and adjust the quantum circuit parameters to minimize the measured energy. The process iterates between quantum preparation/measurement and classical optimization until convergence on the lowest energy state.

This hybrid approach compensates for NISQ hardware limitations. Since each quantum circuit is relatively shallow (few layers of gates), errors don’t accumulate catastrophically. The classical optimizer can tolerate some noise in the quantum measurements, averaging over multiple runs to extract meaningful information.
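The loop described above can be sketched in plain Python on a toy problem. This is a minimal illustration, not a molecular simulation: the single-qubit Hamiltonian H = A·Z + B·X, its coefficients, the one-parameter Ry ansatz, and all function names are assumptions chosen so the example runs without a quantum SDK. The "quantum" step is simulated by sampling measurement outcomes, and the classical step is gradient descent using the parameter-shift rule.

```python
import math
import random

# Hypothetical toy Hamiltonian H = A*Z + B*X on a single qubit.
A, B = 1.0, 0.5
EXACT_GROUND_ENERGY = -math.hypot(A, B)  # known analytically for this toy case


def sampled_z(p_zero, shots, rng):
    """Estimate <Z> from simulated shots, where p_zero = P(measuring 0)."""
    zeros = sum(1 for _ in range(shots) if rng.random() < p_zero)
    return (2 * zeros - shots) / shots


def measured_energy(theta, shots, rng):
    """'Quantum' step: prepare Ry(theta)|0> and estimate <H> from noisy samples."""
    a0, a1 = math.cos(theta / 2), math.sin(theta / 2)
    exp_z = sampled_z(a0 * a0, shots, rng)             # measure in the Z basis
    exp_x = sampled_z((a0 + a1) ** 2 / 2, shots, rng)  # apply Hadamard, then measure
    return A * exp_z + B * exp_x


def run_vqe(steps=60, shots=2000, learning_rate=0.4, seed=7):
    """Classical step: gradient descent driven by parameter-shift gradients."""
    rng = random.Random(seed)
    theta = 0.1
    for _ in range(steps):
        plus = measured_energy(theta + math.pi / 2, shots, rng)
        minus = measured_energy(theta - math.pi / 2, shots, rng)
        theta -= learning_rate * (plus - minus) / 2
    return theta, measured_energy(theta, shots, rng)


if __name__ == "__main__":
    theta, energy = run_vqe()
    print(f"theta={theta:.3f}, estimated energy={energy:.3f}, "
          f"exact={EXACT_GROUND_ENERGY:.3f}")
```

Each energy estimate carries shot noise, yet the optimizer still settles near the minimum, which is the noise tolerance the paragraph above describes. A real VQE run would replace the sampling functions with circuit executions on a QPU or simulator.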

Quantum Approximate Optimization Algorithm (QAOA)

QAOA tackles combinatorial optimization – finding the best solution from a finite but enormous set of possibilities. These problems appear everywhere in industry and research.

Purpose: QAOA seeks approximate solutions to NP-hard optimization problems. While it doesn’t guarantee finding optimal solutions, it can potentially find good solutions faster than classical heuristics for certain problem classes. Applications include scheduling, logistics routing, portfolio optimization, circuit design, and graph problems.

Mechanism: QAOA encodes optimization problems into quantum circuits with adjustable parameters. The algorithm alternates between two types of operations. The first type is problem-specific operations that encode the cost function being optimized. The second type is mixing operations that let the system move amplitude between candidate solutions, so that interference can favor better ones.

Like VQE, QAOA alternates between quantum circuit execution and classical parameter optimization. The quantum circuit explores the solution space through quantum superposition and interference, potentially finding better solutions than classical random sampling. The classical optimizer adjusts circuit parameters based on measurement results.
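A single-layer version of this mechanism fits in a short statevector simulation. The sketch below is illustrative only: the 4-node ring graph, the MaxCut objective, the coarse grid search standing in for the classical optimizer, and every function name are assumptions made for this example, and it simulates ideal, noise-free qubits.

```python
import cmath
import math

N_QUBITS = 4
EDGES = [(0, 1), (1, 2), (2, 3), (3, 0)]  # hypothetical 4-node ring graph


def cut_size(bits):
    """Number of edges whose endpoints fall on opposite sides of the cut."""
    return sum(((bits >> u) & 1) != ((bits >> v) & 1) for u, v in EDGES)


def qaoa_state(gamma, beta):
    """One QAOA layer: cost phase exp(-i*gamma*C), then mixer exp(-i*beta*X) per qubit."""
    dim = 1 << N_QUBITS
    state = [complex(1 / math.sqrt(dim))] * dim  # uniform superposition
    # Cost layer: each bitstring picks up a phase proportional to its cut size.
    state = [amp * cmath.exp(-1j * gamma * cut_size(z)) for z, amp in enumerate(state)]
    # Mixer layer: rotate every qubit about the X axis.
    c, s = math.cos(beta), -1j * math.sin(beta)
    for q in range(N_QUBITS):
        mask = 1 << q
        for z in range(dim):
            if not z & mask:
                a, b = state[z], state[z | mask]
                state[z] = c * a + s * b
                state[z | mask] = s * a + c * b
    return state


def expected_cut(gamma, beta):
    """Average cut size over the measurement distribution of the QAOA state."""
    return sum(abs(amp) ** 2 * cut_size(z) for z, amp in enumerate(qaoa_state(gamma, beta)))


def grid_search(steps=24):
    """Stand-in classical optimizer: scan a coarse (gamma, beta) grid."""
    best = (-1.0, 0.0, 0.0)
    for i in range(steps):
        for j in range(steps):
            gamma, beta = math.pi * i / steps, (math.pi / 2) * j / steps
            value = expected_cut(gamma, beta)
            if value > best[0]:
                best = (value, gamma, beta)
    return best


if __name__ == "__main__":
    value, gamma, beta = grid_search()
    print(f"best expected cut {value:.3f} at gamma={gamma:.3f}, beta={beta:.3f}")
```

With gamma = 0 the circuit leaves the uniform superposition unchanged, so the expected cut equals the random-guessing average of 2 out of 4 edges. Tuning the two parameters pushes the expectation above that baseline, though a single layer does not reach the true optimum of 4.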

QAOA’s practical performance remains debated within the quantum computing community. For many optimization problems, highly optimized classical algorithms – particularly specialized heuristics and modern machine learning approaches – remain competitive or superior to current NISQ implementations of QAOA. The algorithm shows promise but hasn’t yet demonstrated clear advantages on practically relevant problems.

However, as quantum hardware improves and researchers better understand which problem structures favor QAOA, quantum speedups may materialize for specific optimization classes. The algorithm represents an active research frontier with applications being explored by companies across industries.

Key Application Areas and Current Results in Quantum Computing 2025

1. Quantum Chemistry and Materials Science

Simulating molecules and materials represents quantum computing’s most mature potential application. The quantum nature of chemical bonds – electrons existing in superposition, molecular orbitals entangling across atoms – maps naturally onto quantum computers.

Understanding molecular behavior enables transformative applications. Designing better catalysts could improve industrial chemical production efficiency and reduce environmental impact. Simulating battery materials could lead to higher energy density storage, advancing electric vehicles and renewable energy. Modeling drug molecules binding to biological targets could accelerate pharmaceutical development and personalize medicine.

Current small-scale demonstrations and research efforts include:

- Simulating catalysts for the Haber-Bosch process (ammonia production)

- Modeling lithium-ion and solid-state battery materials

- Exploring drug binding to protein targets for pharmaceutical development

- Investigating photovoltaic materials for more efficient solar panels

Companies like AstraZeneca, BMW, Daimler, and JPMorgan Chase have partnered with quantum computing providers to explore these applications. In 2025, quantum chemistry demonstrations grew more sophisticated. IonQ, AstraZeneca, AWS, and NVIDIA collaborated on modeling chemical reactions relevant to drug development, though these remain proof-of-concept rather than production applications.

The challenge is scale. Current quantum computers can simulate molecules with a dozen or two atoms. Many commercially interesting molecules – drug candidates, complex catalysts, large organic molecules – contain hundreds of atoms. Reaching that scale requires fault-tolerant quantum computers with hundreds or thousands of logical qubits, likely arriving in the late 2020s or early 2030s.

2. Finance

Financial institutions are exploring quantum computing for several applications, driven by the computationally intensive nature of modern finance.

Portfolio optimization: Finding the best combination of investments to maximize returns while managing risk involves solving complex optimization problems with several constraints. As portfolios grow and constraints multiply, classical optimization becomes computationally expensive. QAOA and quantum annealing approaches have been applied to simplified portfolio optimization problems. However, real-world portfolio optimization involves continuous variables, transaction costs, and regulatory constraints that complicate quantum implementations.

Risk analysis: Monte Carlo simulations, widely used in finance to model uncertainty in pricing derivatives and assessing risk, might benefit from quantum amplitude estimation algorithms. These could potentially provide quadratic speedups for certain sampling problems. However, implementing these algorithms on NISQ hardware faces significant challenges from noise and limited circuit depth.

Fraud detection: Quantum machine learning algorithms could potentially identify patterns in transaction data more effectively than classical approaches. Financial institutions process enormous transaction volumes daily, and even modest improvements in fraud detection accuracy could save millions of dollars. However, current quantum machine learning remains mostly theoretical, with practical implementations still limited.
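The quadratic speedup mentioned under risk analysis is easiest to see as a scaling comparison. Classical Monte Carlo error shrinks roughly as 1/√N with N samples, while quantum amplitude estimation error shrinks roughly as 1/N with N oracle queries. The sketch below ignores constant factors, confidence levels, and all hardware overhead, so it shows the asymptotic shape only. The function names are hypothetical.

```python
import math


def classical_samples(target_error):
    """Monte Carlo samples needed for a given error, up to constant factors."""
    return math.ceil(1 / target_error ** 2)


def quantum_queries(target_error):
    """Amplitude-estimation queries needed for the same error, up to constants."""
    return math.ceil(1 / target_error)


if __name__ == "__main__":
    for exponent in (5, 10, 15):
        eps = 2.0 ** -exponent
        print(f"error 2^-{exponent}: classical ~{classical_samples(eps)}, "
              f"quantum ~{quantum_queries(eps)}")
```

The gap widens as the target error tightens, but each quantum query is a deep circuit, which is exactly where NISQ noise and limited depth get in the way.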

Major banks including JPMorgan Chase, Wells Fargo, BBVA, and Standard Chartered have established quantum computing research programs. However, as of 2025, these remain primarily exploratory. No financial institution has deployed quantum computers for production operations. The focus is on algorithm development, understanding which financial problems might benefit from quantum approaches, and building expertise for when hardware matures.

3. Machine Learning

Hybrid quantum machine learning represents an intriguing but controversial frontier. Researchers are investigating whether quantum processors can enhance classical machine learning pipelines by offering speedups or capabilities that classical systems can’t match.

Approaches include:

- Using quantum circuits as feature mapping layers in neural networks

- Quantum kernel methods for pattern recognition and classification

- Variational quantum classifiers for specific data types

- Quantum-enhanced optimization for training classical models
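As one concrete instance of the approaches listed above, a quantum kernel scores the similarity of two data points by the overlap of the quantum states that encode them. The sketch below assumes a deliberately tiny feature map, a single Ry rotation on one qubit, and computes the overlap exactly. On hardware the same quantity would be estimated from measurement counts. The feature map and function names are illustrative assumptions.

```python
import math


def feature_state(x):
    """Encode a scalar x as the one-qubit state Ry(x)|0> = (cos(x/2), sin(x/2))."""
    return math.cos(x / 2), math.sin(x / 2)


def quantum_kernel(x, y):
    """Fidelity kernel |<phi(x)|phi(y)>|^2 between two encoded points."""
    ax, bx = feature_state(x)
    ay, by = feature_state(y)
    return (ax * ay + bx * by) ** 2


def kernel_matrix(points):
    """Gram matrix that a classical kernel method (for example an SVM) could consume."""
    return [[quantum_kernel(x, y) for y in points] for x in points]


if __name__ == "__main__":
    for row in kernel_matrix([0.0, 0.5, math.pi]):
        print([round(value, 3) for value in row])
```

For this one-qubit map the kernel reduces to cos²((x − y)/2), which a classical computer evaluates trivially. That is the crux of the debate: a quantum kernel is only interesting when the feature map is hard to simulate classically and still useful for the data.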

The fundamental question remains contentious: for which machine learning tasks, if any, do quantum computers offer advantages over rapidly improving classical AI accelerators like GPUs and TPUs? Classical machine learning hardware has benefited from massive investment and optimization. Neural networks run extraordinarily efficiently on modern accelerators.

In 2025, several proof-of-concept demonstrations showed quantum machine learning working on small datasets. Researchers explored using quantum processors for specific tasks within larger AI systems. However, practical advantages remain elusive. Most quantum machine learning demonstrations can be matched or exceeded by classical approaches on today’s hardware.

The answer likely depends on specific data structures and problem characteristics. Quantum systems might offer advantages with data that has an inherent quantum structure, such as quantum sensing data and molecular data. They might also help with problems that involve searching exponentially large spaces, where quantum interference can provide a benefit. Classical machine learning, by contrast, excels at pattern recognition in high-dimensional but ultimately classical data.

As quantum hardware improves and researchers better understand which problem structures favor quantum approaches, quantum machine learning may find niches. However, dramatic quantum speedups for general machine learning appear unlikely based on current theoretical understanding. The field continues as an active research area with more questions than answers.

The Roadmap to Fault Tolerance

The path to achieving Quantum Advantage hinges entirely on conquering errors and building reliable logical qubits.

1. The Error Correction Imperative

The greatest challenge in quantum computing is the inherent fragility of the qubit. Environmental noise destroys the quantum state through decoherence almost instantly. This is why error correction is not optional; it is the absolute imperative for scaling.

- Encoding the Logical Qubit: In classical computing, a bit flip is easily fixed. However, quantum errors, such as bit flips and phase flips, must be fixed without measuring the qubit. Measuring the qubit would collapse its superposition. Quantum error correction works by encoding a single useful logical qubit into a redundant system of many physical qubits.

- The Resource Overhead: The engineering requirement is staggering. A commonly cited benchmark is factoring an RSA-2048 key, a cryptographically significant task. Published estimates put it at billions of high-fidelity logical operations, which could demand millions of physical qubits to sustain the necessary error correction code.
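The redundancy idea behind a logical qubit can be illustrated with the simplest possible code, a repetition code with majority voting. The sketch below is a classical stand-in and only models independent bit flips. Real quantum error correction must also handle phase flips and extracts error syndromes without measuring the encoded data directly. The function names are hypothetical.

```python
import random
from math import comb


def repetition_logical_error(p_flip, n_copies=3):
    """Probability that a majority of n_copies (odd) flip, defeating the vote."""
    return sum(
        comb(n_copies, k) * p_flip ** k * (1 - p_flip) ** (n_copies - k)
        for k in range(n_copies // 2 + 1, n_copies + 1)
    )


def simulate_logical_error(p_flip, n_copies=3, trials=20_000, seed=0):
    """Monte Carlo check of the same quantity with independent random flips."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p_flip for _ in range(n_copies))
        if flips > n_copies // 2:
            failures += 1
    return failures / trials


if __name__ == "__main__":
    for n in (1, 3, 5, 7):
        print(f"{n} copies at 1% flip rate: logical error "
              f"{repetition_logical_error(0.01, n):.2e}")
```

Below a break-even error rate, adding copies suppresses the logical error rapidly, at the cost of more physical resources per protected bit. That trade is the same one that drives the qubit overhead of quantum codes.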

2. Industry Roadmaps and Timelines

The major players have ambitious, multi-year plans centered on hitting error correction milestones.

- IBM’s Roadmap: IBM has set aggressive targets focused on scaling. It plans to deliver larger, increasingly complex QPU chips while improving fidelity year over year. The focus is on a modular architecture in which multiple chiplets are connected, which IBM treats as the key to reaching millions of physical qubits.

- IonQ/Quantinuum’s Focus: These companies prioritize demonstrating complex, high-fidelity logical operations that can run quantum algorithms beyond the reach of classical simulation. They focus less on raw qubit count and more on raising benchmark scores such as Quantum Volume and Algorithmic Qubits.

Realistic predictions for genuine, broadly applicable Quantum Advantage (i.e., when a quantum computer becomes a necessary tool for solving real-world industry problems) remain cautious. Small-scale demonstrations may appear sooner. However, full-scale, useful quantum computing requires large, fault-tolerant logical systems and is widely expected to emerge in the 2030s or later.

Conclusion

2025 marks a stability year: a moment where architectures mature, logical qubit demonstrations become more convincing, and scaling becomes more deliberate. We’re not at practical quantum advantage yet, but we have a clearer roadmap than ever. The quantum computing 2025 landscape is defined by accuracy, coherence improvements, and a realistic shift toward error correction.

If you’re building a career in this field, this is your moment. The skills to learn now are writing quantum circuits, understanding noise, and designing NISQ algorithms. The inflection point may come fast, and those who prepare early will be the ones shaping the next era of computing.

This is the perfect time to explore toolkits like Qiskit, Cirq, and PennyLane, learn the physics behind coherence and fidelity, and prepare to contribute to a global shift in computation.

Recommended Resources for Curious Minds

If you are eager to deepen your understanding of the technical details, algorithms, and practical applications defining the Quantum Computing 2025 landscape, these resources are highly recommended:

- Quantum Computing Since Democritus by Scott Aaronson

- Quantum Computation and Quantum Information by Nielsen & Chuang

- The Age of AI: And Our Human Future by Henry A. Kissinger, Eric Schmidt, and Daniel Huttenlocher

- An Introduction to Quantum Computing with Qiskit by Anya Bindra